Fraud in SaaS payment systems is evolving fast, costing consumers billions annually. Traditional methods can't keep up with threats like subscription fraud, account takeovers, and free trial abuse. AI-powered models now detect fraud in milliseconds by analyzing behaviors, anomalies, and transaction data. Here's how these models are reshaping fraud prevention:

- Behavioral Biometrics: Tracks typing speed, mouse movements, and device usage to identify bots or fraudsters.

- Anomaly Detection: Flags unusual patterns like unexpected login locations or transaction amounts.

- Classification Models: Uses historical data to label transactions as legitimate or fraudulent.

Advanced techniques like Graph Neural Networks (GNNs) and Deep Learning uncover fraud rings and time-sensitive patterns. Combined with Champion-Challenger frameworks, these systems continuously improve and adapt to new tactics.

Reported results for AI systems include fraud reductions of up to 38%, false-positive reductions of as much as 70%, and decisions processed in under 200 milliseconds. For SaaS businesses, this means securing revenue and improving user trust while staying ahead of sophisticated fraud schemes.

Core AI Models for Real-Time Fraud Detection

Combatting fraud in SaaS payment systems relies heavily on three key AI models, each designed to analyze specific signals and quickly identify risks. These models work together to provide a robust defense against fraudulent activities.

Behavioral Biometrics Models focus on how users interact with a platform by monitoring factors like typing speed, mouse movements, navigation habits, and device usage. By creating unique behavioral signatures, these models can detect when an account is being operated by an automated system or a fraudster. For example, human interactions tend to show natural variations, while bots often operate with precise, repetitive timing. This layer of behavioral analysis adds depth to other detection methods.
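As a rough illustration of the timing idea above, the sketch below measures how much the gaps between consecutive input events vary. The function names and the `cv_threshold` value are assumptions made for this example, not values from any production system; a real behavioral model would combine many more signals.

```python
from statistics import mean, pstdev


def interval_cv(timestamps_ms):
    """Coefficient of variation of the gaps between consecutive events."""
    gaps = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three timestamps")
    avg = mean(gaps)
    if avg <= 0:
        raise ValueError("timestamps must be increasing")
    return pstdev(gaps) / avg


def looks_automated(timestamps_ms, cv_threshold=0.05):
    # cv_threshold is an illustrative assumption, not a tuned value.
    return interval_cv(timestamps_ms) < cv_threshold


# Keystroke timestamps in milliseconds (made-up sample data).
human_keys = [0, 140, 390, 470, 820, 990]   # irregular gaps
bot_keys = [0, 100, 200, 300, 400, 500]     # perfectly regular gaps
```

Here `looks_automated(bot_keys)` flags the evenly spaced sequence, while the irregular `human_keys` sequence passes.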

Anomaly Detection Models leverage unsupervised learning to spot irregularities without needing pre-labeled fraud data. These models establish norms for transaction amounts, login times, and user locations, flagging deviations that might signal fraud. They’re particularly adept at identifying zero-day attacks - new threats that haven’t been encountered before. For instance, if a user typically logs in from Chicago but suddenly initiates a transaction from Moscow, the system can flag this behavior as suspicious. Trackly, for example, reports 98.1% accuracy in detecting anomalies on synthetic datasets, although results on synthetic data do not necessarily carry over to production traffic.
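One simple way to picture a learned "norm" is a per-user baseline with a robust deviation score. The sketch below uses the median and median absolute deviation (MAD) of past amounts plus a set of previously seen locations. It is a stand-in for illustration only: the function names, the 3.5 cutoff, and the sample data are assumptions, and production systems typically use richer unsupervised models.

```python
from statistics import median


def robust_z(value, history):
    """Modified z-score based on the median and MAD of past values."""
    med = median(history)
    mad = median([abs(x - med) for x in history])
    if mad == 0:
        return 0.0 if value == med else float("inf")
    return 0.6745 * (value - med) / mad


def anomaly_flags(amount, city, past_amounts, known_cities, cutoff=3.5):
    # cutoff=3.5 is a common rule of thumb, used here as an assumption.
    flags = []
    if abs(robust_z(amount, past_amounts)) > cutoff:
        flags.append("unusual_amount")
    if city not in known_cities:
        flags.append("new_location")
    return flags


past = [42.0, 39.5, 45.0, 41.0, 40.0, 44.5, 43.0]
print(anomaly_flags(43.0, "Chicago", past, {"Chicago"}))
print(anomaly_flags(900.0, "Moscow", past, {"Chicago"}))
```

The first call returns no flags; the second returns both, because the amount is far outside the user's history and the location has not been seen before.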

Classification Models use supervised learning to sort transactions as either legitimate or fraudulent based on historical data. Techniques like Random Forest and Deep Neural Networks analyze a wide range of variables, including transaction amounts, merchant categories, device fingerprints, and IP addresses, to uncover patterns linked to known fraud cases. These models are incredibly fast, processing millions of signals in just milliseconds. A Stripe engineer described this precision-driven process:

"I obsess about a single moment that lasts just a fraction of a second. It begins when someone clicks 'purchase,' and it ends when their transaction is confirmed."

To enhance accuracy, many systems combine these models into ensemble frameworks, producing a fraud score (ranging from 0 to 100) in under 100 milliseconds. This score helps decide whether a transaction is approved, flagged for additional verification, or blocked outright - all while ensuring users experience minimal friction. These foundational models set the stage for even more advanced AI techniques to tackle fraud effectively.
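A minimal sketch of that ensemble step might look like the following. It assumes each model outputs a fraud probability between 0 and 1 and blends them with a weighted average; the weights and the two decision thresholds are hypothetical and would normally be tuned against labeled outcomes.

```python
def ensemble_score(model_probs, weights):
    """Weighted average of per-model fraud probabilities, scaled to 0-100."""
    total_weight = sum(weights[name] for name in model_probs)
    blended = sum(model_probs[name] * weights[name] for name in model_probs)
    return round(100 * blended / total_weight, 1)


def decide(score, review_at=40, block_at=80):
    # Thresholds are illustrative assumptions, not recommended values.
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "review"
    return "approve"


weights = {"behavioral": 0.2, "anomaly": 0.3, "classifier": 0.5}
low_risk = {"behavioral": 0.10, "anomaly": 0.20, "classifier": 0.05}
high_risk = {"behavioral": 0.90, "anomaly": 0.95, "classifier": 0.85}

print(decide(ensemble_score(low_risk, weights)))
print(decide(ensemble_score(high_risk, weights)))
```

The "review" band is where step-up verification would fit, so borderline transactions are challenged rather than declined outright.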


Advanced AI Techniques for Fraud Detection

SaaS platforms are stepping up their game in fraud detection by incorporating advanced AI techniques. These methods go beyond standard models, creating a layered defense to identify fraudulent activity in real-time.

Graph Neural Network Models

Graph Neural Networks (GNNs) are reshaping how fraud detection systems analyze payment data. Instead of treating transactions as isolated events, GNNs explore the relationships between accounts, devices, IP addresses, and merchants. This approach is particularly effective in uncovering fraud rings and coordinated schemes.

The strength of GNNs lies in their "neighborhood aggregation" capability. They gather data from connected nodes, allowing them to assess risks beyond direct interactions. As Naim from NVIDIA's RAPIDS cuGraph team explains:

"GNNs consider accounts, transactions, and devices as interconnected nodes - uncovering suspicious patterns across the entire network."

The results are impressive. For example, when tested with the TabFormer dataset, a GNN-enhanced XGBoost model achieved an AUPRC (area under the precision-recall curve) of 0.9, outperforming the standard XGBoost model's 0.79. A UK bank, after switching to a Graph Transformer for detecting money muling, saw a 47% improvement in £-weighted recall at the same precision level. Similarly, a Brazilian neobank using Graph Transformers reported a 25% boost in weighted AUPRC while maintaining sub-100 millisecond latency for thousands of queries per second.

GNNs excel at identifying network-wide fraud patterns that simpler models often miss. By using graph convolution layers, they can trace complex transaction chains designed to evade detection. As Kumo.ai puts it:

"Fraud doesn't occur in isolation but spreads through connections such as shared devices, linked merchants, and coordinated transaction networks. Graph learning captures these connections directly, revealing coordinated fraud patterns that row-based models overlook."

For SaaS platforms processing high transaction volumes, GPU-accelerated graph frameworks offer significant efficiency gains: up to 39x faster data preprocessing and 5.63x faster model training compared to CPU-based systems. Many platforms now combine GNNs with tools like XGBoost, where GNNs create "node embeddings" (mathematical representations of network context) that are used for real-time fraud scoring.

| Feature | Traditional AI Models | Graph Neural Networks |
|---|---|---|
| Data Focus | Individual transaction features | Relationships and network topology |
| Fraud Detection | Isolated anomalies | Coordinated fraud rings and collusion |
| Feature Engineering | Manual effort required | Learns structural features automatically |
| Context Range | Limited to immediate data | Extends to multiple "hops" in the network |
| Explainability | High (decision trees/coefficients) | Moderate to low (requires specialized tools) |
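To make "neighborhood aggregation" and multi-hop context concrete, here is a deliberately simplified sketch. It averages a single risk value over each node and its neighbors, repeated once per hop. A real GNN learns weighted transformations over feature vectors; the unweighted mean, the node names, and the toy graph below are all assumptions for illustration.

```python
def aggregate(features, edges, hops=2):
    """Repeatedly replace each node's value with the mean of itself and its neighbors."""
    neighbors = {node: set() for node in features}
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)

    current = dict(features)
    for _ in range(hops):
        updated = {}
        for node, value in current.items():
            group = [value] + [current[m] for m in neighbors[node]]
            updated[node] = sum(group) / len(group)
        current = updated
    return current


# 1.0 marks a known-fraud account; everything else starts at 0.0.
risk = {"acct_A": 0.0, "acct_B": 1.0, "acct_C": 0.0, "dev_1": 0.0, "dev_2": 0.0}
links = [("acct_A", "dev_1"), ("acct_B", "dev_1"), ("acct_C", "dev_2")]

one_hop = aggregate(risk, links, hops=1)
two_hop = aggregate(risk, links, hops=2)
print(one_hop["acct_A"], two_hop["acct_A"], two_hop["acct_C"])
```

After one hop, `acct_A` still looks clean. After two hops, the risk from `acct_B` reaches it through the shared device, while the unconnected `acct_C` stays at zero. That extra reach is the context a row-based model does not see.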

Deep Learning and Neural Network Models

While GNNs focus on relationships, deep learning models excel at detecting patterns in time-sensitive and streaming data. These architectures, like Transformers and C2GAT, automate fraud detection without relying on manual feature engineering. They directly analyze raw transaction data to identify suspicious behavior.

One standout feature of these models is their ability to capture temporal dynamics - the timing and sequence of events that might indicate fraud. For instance, a sudden burst of late-night transactions could be harmless for one user but highly suspicious for another. Continuous-time models encode timestamps to detect these patterns effectively. As noted in Frontiers in Artificial Intelligence:

"Rule-based pipelines cannot fully model the continuously evolving graph topology and timing of transactions."

The performance improvements are striking. For example, a Coupled Modular Simplicial Graph Neural Network (CMSGNN-SAO) achieved 99.5% accuracy and 99.1% precision in credit card fraud detection. Advanced systems have reduced the time to detect fraud by over 60% while increasing detection accuracy by 25%.
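The timestamp encoding mentioned earlier in this section can be sketched in a few lines. One common approach, assumed here rather than taken from any of the cited systems, maps the hour of day onto a circle so that 23:30 and 00:30 end up close together, and records the gap since the user's previous event. The function and field names are hypothetical.

```python
import math
from datetime import datetime


def time_features(ts, previous_ts=None):
    """Cyclical hour-of-day encoding plus seconds since the previous event."""
    hour = ts.hour + ts.minute / 60
    angle = 2 * math.pi * hour / 24
    gap = (ts - previous_ts).total_seconds() if previous_ts is not None else None
    return {
        "hour_sin": math.sin(angle),
        "hour_cos": math.cos(angle),
        "seconds_since_prev": gap,
    }


def clock_distance(a, b):
    """Distance between two events on the 24-hour circle."""
    return math.hypot(a["hour_sin"] - b["hour_sin"], a["hour_cos"] - b["hour_cos"])


late = time_features(datetime(2025, 1, 1, 23, 30))
early = time_features(datetime(2025, 1, 2, 0, 30), previous_ts=datetime(2025, 1, 1, 23, 30))
noon = time_features(datetime(2025, 1, 1, 12, 0))
```

With this encoding, `clock_distance(late, early)` is much smaller than `clock_distance(late, noon)`, which a raw hour value (23 versus 0) would get wrong. Per-user baselines built on such features are what let a late-night burst be normal for one account and suspicious for another.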

Pre-authorization screening is another major advantage. By blocking fraudulent transactions before approval, these models not only prevent losses but also enhance customer experience. In January 2025, Signifyd reported that a major electronics retailer increased its conversion rate by 200 basis points after adopting AI-driven pre-authorization fraud screening.

Deep learning models also adapt quickly to new fraud tactics, even before chargeback data becomes available - a crucial capability since chargebacks can take up to 90 days to process. Aerospike highlights the speed advantage:

"The system won't just react faster; it will constrain fraudsters' time horizon to milliseconds."

Champion-Challenger Machine Learning Models

To keep fraud detection systems sharp, SaaS platforms use Champion-Challenger frameworks. This approach continuously tests multiple models simultaneously. The "Champion" is the current top-performing model, while "Challengers" are newer models that compete to replace it.

Challenger models operate in shadow mode, scoring live data without affecting actual transactions. Statistical A/B testing determines whether a challenger outperforms the champion before it goes live. This setup allows platforms to validate improvements without risking customer trust.
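Shadow mode can be sketched as a thin wrapper around the two models. In the example below, only the champion's score is returned to the payment flow, while the challenger's score is logged for later comparison once fraud labels arrive. The function names and log format are assumptions, and the statistical significance test is left out for brevity.

```python
def score_with_shadow(txn, champion, challenger, shadow_log):
    """Return the champion's score; record the challenger's score on the side."""
    live_score = champion(txn)
    try:
        shadow_log.append((txn["id"], live_score, challenger(txn)))
    except Exception:
        # A failing challenger must never affect the live decision.
        pass
    return live_score


def precision_recall(scores, labels, threshold=0.5):
    """Precision and recall at a threshold, given confirmed fraud labels."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y)
    fp = sum(1 for s, y in pairs if s >= threshold and not y)
    fn = sum(1 for s, y in pairs if s < threshold and y)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


log = []
live = score_with_shadow({"id": "t1"}, lambda t: 0.2, lambda t: 0.9, log)
print(live, log)
```

Once enough labeled transactions accumulate, running `precision_recall` on both columns of the log gives the side-by-side numbers a promotion decision would start from.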

Fraud models degrade over time as fraudsters adapt. Without retraining, precision can drop from 90% to 70-75% within 12-18 months. As Bottomline emphasizes:

"It's never quite good enough... fraud systems are far too often put in place and then trusted to consistently deliver results without any monitoring or adjustment."

Implementing this framework requires robust infrastructure, such as AWS EMR and S3, to handle large-scale data processing and monitor "concept drift" - when fraud patterns evolve and reduce model accuracy. The rewards are substantial: advanced ML models can cut fraud losses by 40-60% and reduce false positives by 50-60%.

Reducing false positives is especially critical. Around 15% of customers who experience three or more false declines switch to competitors. As Ryan Aminollahi explains:

"The goal isn't to approve everything. It's to approve the right things fast. ML helps you get there."

Champion-Challenger frameworks also enable continuous learning. Models automatically retrain on confirmed fraud cases and false positives, maintaining accuracy as threats evolve. This setup allows platforms to process 100x more transactions per analyst compared to manual review teams.

Implementing AI Models in SaaS Payment Systems

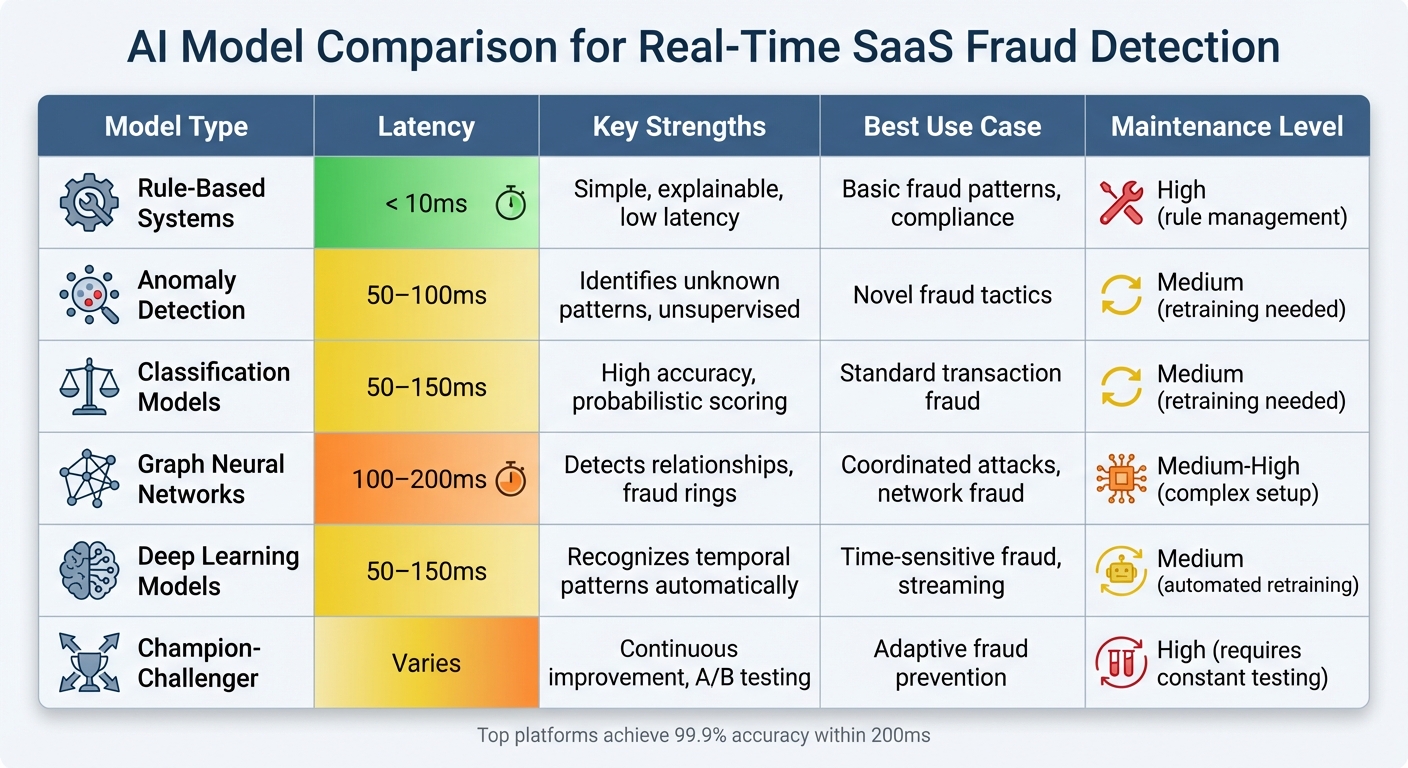

AI Model Comparison for Real-Time SaaS Fraud Detection

Model Deployment Best Practices

When deploying AI models for fraud detection in real-time payment systems, balancing speed, accuracy, and reliability is key. These systems operate within a 50–200ms latency budget for the entire fraud detection process, so every millisecond matters.

To ensure smooth operations, set the machine learning model inference timeout to 30ms. If a model times out or a data source fails, the system should automatically revert to rules-based scoring instead of skipping the fraud check altogether. To save time, fetch critical data - like transaction history, device fingerprints, and geolocation - simultaneously rather than sequentially. Tools like OpenTelemetry can help you monitor p99 inference latency and trace the entire pipeline from feature extraction to post-processing. If timeout rates exceed 1%, it’s a sign to optimize the model serving layer to reduce reliance on fallback mechanisms.
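The timeout, fallback, and parallel-fetch pattern described above could be sketched with `asyncio` as follows. The fetch functions, the `rules_score` fallback, and the sample models are hypothetical stand-ins; only the 30ms timeout comes from the text. A real pipeline would also put time bounds on the data fetches themselves.

```python
import asyncio

MODEL_TIMEOUT_S = 0.030  # 30 ms inference timeout


async def fetch_history(txn):
    await asyncio.sleep(0.001)  # stands in for a feature-store lookup
    return {"txn_count_24h": 3}


async def fetch_device(txn):
    await asyncio.sleep(0.001)
    return {"device_seen_before": True}


async def fetch_geo(txn):
    await asyncio.sleep(0.001)
    return {"country": "US"}


def rules_score(txn):
    """Hypothetical rules-based fallback: coarse, but always available."""
    return 90.0 if txn["amount"] > 1000 else 10.0


async def score_transaction(txn, model, timeout_s=MODEL_TIMEOUT_S):
    # Fetch all inputs concurrently rather than one after another.
    results = await asyncio.gather(
        fetch_history(txn), fetch_device(txn), fetch_geo(txn),
        return_exceptions=True,
    )
    if any(isinstance(r, Exception) for r in results):
        return rules_score(txn), "rules_fallback"

    features = dict(txn)
    for part in results:
        features.update(part)
    try:
        score = await asyncio.wait_for(model(features), timeout=timeout_s)
        return score, "model"
    except asyncio.TimeoutError:
        # Never skip the check: fall back to rules when the model is too slow.
        return rules_score(txn), "rules_fallback"


async def fast_model(features):
    return 12.0


async def slow_model(features):
    await asyncio.sleep(0.2)  # exceeds the timeout on purpose
    return 12.0


print(asyncio.run(score_transaction({"amount": 50}, fast_model)))
print(asyncio.run(score_transaction({"amount": 5000}, slow_model)))
```

Returning the scoring path alongside the score makes it straightforward to count how often the fallback fires, which is the timeout rate the 1% guideline refers to.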

A great example of this in action is FreshBooks' integration of Stripe Connect and Radar in early 2024. Under the leadership of Andrew Gunner, Senior Director of Product for Payments, the platform used AI-driven risk scores and identity verification during merchant onboarding. This approach blocked more than 300 fraudulent accounts within just three months. As Gunner noted:

"This tooling has significantly increased the efficiency of our risk team to focus efforts where manual intervention is truly needed, and has allowed us to block more than 300 fraudulent accounts from onboarding onto our platform in just 3 months."

To strengthen fraud prevention, combine machine learning models with rule-based velocity checks, such as flagging accounts with more than 10 transactions in 5 minutes. For sensitive data like card numbers, use hashed values to maintain data privacy and ensure PCI compliance while still enabling identity matching. Models should aim for high recall to catch as much fraud as possible while maintaining enough precision to avoid frustrating legitimate users with false positives. These practices allow SaaS platforms to leverage the full potential of AI in fraud detection.
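Both ideas are small enough to sketch. The velocity check below keeps a sliding window of event times per account and flags anything over 10 events in 300 seconds, matching the example in the text. The card token uses a keyed hash (HMAC-SHA256), which is an assumption on my part: the text only says "hashed values", and whether a given scheme satisfies PCI requirements is a question for your compliance team. Class and function names are hypothetical.

```python
import hashlib
import hmac
from collections import defaultdict, deque


class VelocityCheck:
    """Flags an account once it exceeds max_events inside a sliding window."""

    def __init__(self, max_events=10, window_s=300):
        self.max_events = max_events
        self.window_s = window_s
        self._events = defaultdict(deque)

    def record(self, account_id, now_s):
        events = self._events[account_id]
        events.append(now_s)
        # Drop events that have aged out of the window.
        while events and events[0] <= now_s - self.window_s:
            events.popleft()
        return len(events) > self.max_events


def card_token(card_number, secret_key):
    """Keyed hash so equal card numbers match without storing the number."""
    return hmac.new(secret_key, card_number.encode(), hashlib.sha256).hexdigest()


check = VelocityCheck()
flags = [check.record("acct_1", t) for t in range(11)]  # 11 events in 11 seconds
print(flags[-1])
```

The eleventh event inside the window trips the check; after the window passes, the same account starts clean again.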

AI Model Comparison Table

Here’s a quick overview of different AI model types and their trade-offs:

| Model Type | Latency | Key Strengths | Best Use Case | Maintenance Level |
|---|---|---|---|---|
| Rule-Based Systems | < 10ms | Simple, explainable, low latency | Basic fraud patterns, compliance | High (rule management) |
| Anomaly Detection | 50–100ms | Identifies unknown patterns, unsupervised | Novel fraud tactics | Medium (retraining needed) |
| Classification Models | 50–150ms | High accuracy, probabilistic scoring | Standard transaction fraud | Medium (retraining needed) |
| Graph Neural Networks | 100–200ms | Detects relationships, fraud rings | Coordinated attacks, network fraud | Medium-High (complex setup) |
| Deep Learning Models | 50–150ms | Recognizes temporal patterns automatically | Time-sensitive fraud, streaming | Medium (automated retraining) |
| Champion-Challenger | Varies | Continuous improvement, A/B testing | Adaptive fraud prevention | High (requires constant testing) |

Top-tier tools like FraudNet aim for 99.9% risk scoring accuracy within 200ms, while platforms like DataVisor handle 30 billion events per year with latency under 100ms. The choice of model depends on your needs: startups may prioritize simplicity and speed, while enterprises managing millions of transactions require scalable, robust infrastructure.

Monitoring and Continuous Improvement

Deploying AI models is just the beginning - continuous monitoring is essential to keep fraud detection systems accurate and responsive. Fraud tactics evolve, and without active oversight, models can degrade over time. Effective monitoring should address three areas: Operational (latency, throughput), Data/Input (distribution shifts, schema drift), and Model Performance (accuracy, precision compared to ground truth).

A key metric for detecting drift is the Population Stability Index (PSI). A PSI below 0.1 suggests stability, 0.1–0.2 indicates potential issues, and anything above 0.2 signals significant drift requiring immediate action. Usman Khalid, CEO of Centric, highlights the importance of monitoring:

"A model can respond in 12 milliseconds with a 200 status code and still be producing catastrophically wrong predictions. Application monitoring tells you the model is responding. Model monitoring tells you whether it is responding correctly."

Weekly reviews of false positives, false negatives, and latency are essential. Automated retraining should be triggered when metrics fall below acceptable thresholds. Feedback from manual fraud reviews should also feed directly into the training pipeline to improve accuracy and adapt to new fraud tactics.
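For reference, the Population Stability Index compares the share of observations in each bin between a baseline period and a recent one: PSI = Σ (actual_i − expected_i) × ln(actual_i / expected_i). The sketch below implements that standard formula over pre-binned counts; the `eps` floor for empty bins and the sample counts are assumptions for illustration.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index over matching histogram bins."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both histograms need the same number of bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)

    total = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # eps keeps empty bins from producing log(0) or division by zero.
        e_share = max(expected / expected_total, eps)
        a_share = max(actual / actual_total, eps)
        total += (a_share - e_share) * math.log(a_share / e_share)
    return total


baseline = [25, 25, 25, 25]   # fraud-score histogram at training time
this_week = [10, 20, 30, 40]  # same bins, recent traffic

print(round(psi(baseline, baseline), 3))
print(round(psi(baseline, this_week), 3))
```

Identical distributions give a PSI of zero, while the shifted sample lands above 0.2.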

For example, Danske Bank reduced false positives by 50% after shifting from rule-based systems to AI-driven fraud detection. This improvement is critical, as research shows that 33% of customers won’t return to a business after experiencing a false decline.

Set tiered alerts to respond to drift effectively. For instance, a PSI above 0.25 should trigger a critical alert requiring action within 4 hours, while a PSI between 0.10 and 0.20 on less critical features can wait for the weekly review. Simulate attacks regularly using red team exercises to test your system's resilience. Also, monitor shifts in fraud score distributions, as these can signal data drift or the need for retraining.
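Those tiers translate into a small routing function. The two rules from the text are encoded directly; the text does not say what to do with values between 0.20 and 0.25, or with mid-range drift on critical features, so the "investigate" tier below is an assumption added to close that gap. The tier names are hypothetical.

```python
def drift_alert(psi_value, critical_feature):
    """Map a feature's PSI to an alert tier."""
    if psi_value > 0.25:
        return "critical_4h"      # act within 4 hours
    if psi_value < 0.10:
        return "ok"
    if psi_value <= 0.20 and not critical_feature:
        return "weekly_review"    # can wait for the weekly review
    # Assumption: the remaining band is not defined in the text.
    return "investigate"


print(drift_alert(0.31, critical_feature=False))
print(drift_alert(0.15, critical_feature=False))
print(drift_alert(0.15, critical_feature=True))
```

Keeping the thresholds in one function makes them easy to review and adjust as you learn which features drift harmlessly and which ones precede real accuracy loss.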

Conclusion

Real-time AI models are reshaping fraud detection for SaaS businesses by offering instant, data-driven responses. With scoring speeds clocking in at 150–200 milliseconds, these systems intercept fraudulent transactions before any funds are lost. This proactive approach protects recurring revenue from issues like trial abuse, stolen credit cards, and account takeovers. On average, companies using these systems have reported a 38% drop in fraud and a 25% reduction in fraud-related losses within just six months.

But it’s not just about stopping fraud. Modern AI platforms also focus on reducing false positives - by as much as 70% - to ensure that genuine customers don’t face unnecessary hurdles during checkout. Alhamrani Universal highlights how this approach builds confidence:

"By understanding the behavioral patterns of every user, terminal and device, we can scale our business with confidence, respond instantly to emerging threats and reinforce the trust our customers, partners and regulators place in us."

This balance between fraud prevention and customer experience is crucial. Additionally, AI-powered systems drastically cut investigation times - by 40–80% - allowing risk teams to focus on genuinely suspicious cases. NCR Atleos illustrates this shift:

"With INETCO BullzAI... we've shifted from a reactive model to a proactive AI-driven strategy that enables us to detect and block increasingly sophisticated and unknown fraud patterns in real-time."

Unlike static systems, which struggle to keep up, AI-driven platforms continuously learn and adapt to emerging fraud patterns and zero-day attacks. Within the first 90 days of implementation, many platforms achieve a 45% reduction in false positives. By embracing real-time AI solutions, businesses can secure their operations, strengthen customer trust, and position themselves for long-term growth.

FAQs

What data do I need to run real-time fraud scoring?

To implement real-time fraud scoring, you’ll need data that allows AI models to assess transaction risks with precision. This data typically includes transaction details, user behavior, device information, and historical fraud patterns.

Some of the key components are:

- Transaction Metadata: Information such as location, time, and amount helps paint a clearer picture of the transaction.

- User Behavior: Patterns like login frequency, browsing habits, and purchase history can signal unusual activity.

- Device Information: Data about the device used, such as IP address, operating system, and browser, can help identify potential red flags.

- Historical Fraud Patterns: Past fraud data provides the system with a baseline to detect suspicious activity.

By combining these elements, the system can evaluate risks in milliseconds, ensuring quick and accurate fraud detection.
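As a rough picture of what a single scoring request might carry, the sketch below groups the four data categories into one typed record. Every field name here is a hypothetical example; the actual schema depends on your payment provider and what you are permitted to collect.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScoringRequest:
    # Transaction metadata
    transaction_id: str
    amount: float
    currency: str
    occurred_at: datetime
    country: str
    # Device information
    ip_address: str
    device_os: str
    browser: str
    # User behavior
    logins_last_7d: int
    purchases_last_30d: int
    # Historical fraud patterns
    prior_fraud_reports: int


request = ScoringRequest(
    transaction_id="txn_001",
    amount=49.0,
    currency="USD",
    occurred_at=datetime(2025, 1, 15, 14, 30, tzinfo=timezone.utc),
    country="US",
    ip_address="203.0.113.7",  # documentation-only address range
    device_os="macOS",
    browser="Firefox",
    logins_last_7d=5,
    purchases_last_30d=1,
    prior_fraud_reports=0,
)
print(asdict(request)["amount"])
```

Defining the record up front also gives monitoring something concrete to check inputs against when looking for schema drift.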

How can I reduce false declines without increasing fraud?

To reduce false declines without compromising fraud prevention, SaaS platforms can leverage AI-powered tools that analyze transactions in real-time. These tools help distinguish between legitimate users and potential fraudsters.

Key strategies include:

- Dynamic fraud controls: These adjust based on the level of risk detected during a transaction.

- Multi-layered verification: Additional checks are triggered when suspicious activity is identified, adding an extra layer of security.

- Behavioral monitoring: Continuous tracking of user behavior patterns helps flag unusual activities while allowing genuine transactions to proceed smoothly.

By combining these methods, platforms can strike a balance - approving legitimate transactions while effectively blocking fraudulent ones.

When should I use graph models instead of standard classifiers?

Graph models shine when it's crucial to understand the relationships and interactions between entities. Unlike traditional classifiers, these models focus on analyzing connections and uncovering patterns, which makes them particularly effective for tackling complex fraud schemes. For instance, they are excellent at mapping dynamic transaction networks and spotting coordinated fraudulent activities through techniques like link analysis and pattern recognition. If accurate, real-time fraud detection hinges on understanding how entities are connected, graph models are the way to go.